2023.05.24 (WED) Study Notes

#Pyenv #Airflow #AWS #S3

1. pyenv

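Python versions differ across projects and pipeline environments, so a data engineer has to install several Python versions in the development environment for development and testing. pyenv makes this manageable by providing an isolated environment with the desired version for each directory.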
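The per-directory selection relies on a `.python-version` file (written by `pyenv local`), which pyenv looks for in the current directory and then in each parent directory. The sketch below only imitates that lookup as an illustration. `find_local_version` is a hypothetical helper, not part of pyenv, and the real tool also honors the `PYENV_VERSION` variable and the global setting.

```python
from pathlib import Path


def find_local_version(start_dir):
    """Return the first version named in the nearest .python-version file.

    Hypothetical helper: searches start_dir, then each parent directory,
    and returns None when no non-empty .python-version file is found.
    """
    current = Path(start_dir).resolve()
    for directory in [current, *current.parents]:
        candidate = directory / ".python-version"
        if candidate.is_file():
            entries = candidate.read_text().split()
            # The file may list several versions; only the first is returned here.
            return entries[0] if entries else None
    return None


if __name__ == "__main__":
    # Prints the version pinned for the current directory, or None.
    print(find_local_version("."))
```

Run from inside a project directory, this shows which version name the directory is pinned to, if any.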

- Install pyenv

curl https://pyenv.run | bash

- zshrc configuration: open ~/.zshrc (vi ~/.zshrc) and add the lines below

export PYENV_ROOT="$HOME/.pyenv"

command -v pyenv >/dev/null || export PATH="$PYENV_ROOT/bin:$PATH"

eval "$(pyenv init -)"

eval "$(pyenv virtualenv-init -)"

- Verify the installation

source ~/.zshrc # reload the shell configuration

pyenv versions

- List the Python versions available to install

pyenv install --list

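pyenv install --list | grep "3.7.1" # show only entries matching 3.7.1, such as 3.7.16

- Install a specific Python version

pyenv install 3.7.16

- Change the default Python version

pyenv global 3.7.16

- Create a Python development environment

pyenv virtualenv 3.7.16 pd24air # create the development environment

pyenv global pd24air # use the new environment as the default

2. AWS Hadoop System S3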

Amazon Simple Storage Service (S3) is a storage service for the Internet.

2-1. Basic

* Run the steps below in the pd24air environment created above.
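Before installing anything, it can help to confirm which interpreter is actually active. The following is a minimal sketch, assuming the environment directory is named after the environment (as pyenv-virtualenv normally arranges it). `current_python` and `looks_like_env` are hypothetical helper names introduced here for illustration.

```python
import os
import sys


def current_python():
    """Return (version string, environment prefix) of the running interpreter."""
    version = ".".join(str(part) for part in sys.version_info[:3])
    return version, sys.prefix


def looks_like_env(env_name, prefix=None):
    """Check whether the last path component of the prefix equals env_name.

    Assumption: the virtualenv lives in a directory named after the
    environment, e.g. .../envs/pd24air.
    """
    prefix = sys.prefix if prefix is None else prefix
    return os.path.basename(os.path.normpath(prefix)) == env_name


if __name__ == "__main__":
    version, prefix = current_python()
    print("python", version, "at", prefix)
    print("pd24air active:", looks_like_env("pd24air"))
```

If the last line prints False, `pip install` would install into a different environment than intended.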

- Install awscli

pip install awscli

aws configure # set up access credentials ...

- aws cli

aws s3 ls # list

aws s3 rm s3://mybucket # delete

aws s3 mv sub.log s3://mybucket # move

aws s3 cp sub.log s3://mybucket/sub/ # local → S3; the sub/ folder is created first if it does not exist

2-2. Transferring files to AWS with Airflow

- awscli must be installed in the Airflow container

# check the status of the running containers

docker ps

docker exec -it [worker container ID] bash

# install awscli inside the container

pip install awscli

aws configure # set up access credentials ...

- Create the DAG in airflow/dags

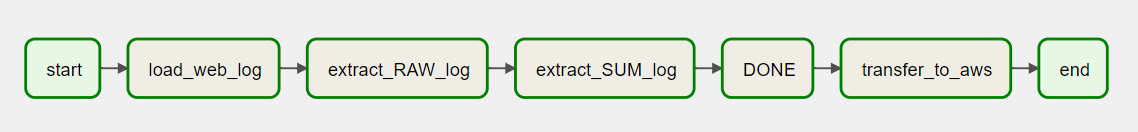

📌 'Transfer to AWS' Dag Code

from airflow import DAG

from datetime import datetime, timedelta

from airflow.operators.bash import BashOperator

from airflow.operators.empty import EmptyOperator

MY_PATH = '/opt/airflow/dags/data'

default_args = {

'owner': 'airflow',

'depends_on_past': False,

'start_date': datetime(2023, 5, 1),

'retries': 0,

}

test_dag = DAG(

'mario-sub-aws',

default_args=default_args,

schedule_interval=timedelta(days=1)

)

# Define the BashOperator task

load_web_log = BashOperator(

task_id = 'load_web_log',

bash_command=f"aws s3 cp s3://log/web.log {MY_PATH}/web.log",

dag=test_dag

)

bash_extract_raw = BashOperator(

task_id='extract_RAW_log',

bash_command=f"cat {MY_PATH}/web.log | grep 'item=' | cut -d'=' -f 2 | cut -d',' -f 1 > {MY_PATH}/RAW.log",

dag=test_dag

)

bash_extract_sum = BashOperator(

task_id='extract_SUM_log',

bash_command=f"cat {MY_PATH}/RAW.log | sort -n | uniq -c > {MY_PATH}/SUM.log",

dag=test_dag

)

bash_task_done = BashOperator(

task_id='DONE',

bash_command=f"touch {MY_PATH}/DONE",

dag=test_dag

)

bash_task_aws = BashOperator(

task_id='transfer_to_aws',

bash_command=f"""

aws s3 cp {MY_PATH}/RAW.log s3://log/sub/RAW.log

sleep 1

aws s3 cp {MY_PATH}/SUM.log s3://log/sub/SUM.log

sleep 1

aws s3 cp {MY_PATH}/DONE s3://log/sub/DONE

echo "END"

""",

dag=test_dag

)

start_task = EmptyOperator(task_id='start',dag=test_dag)

end_task = EmptyOperator(task_id='end',dag=test_dag)

start_task >> load_web_log >> bash_extract_raw >> bash_extract_sum >> bash_task_done

bash_task_done >> bash_task_aws >> end_task
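To check the extraction logic without running Airflow, the two shell pipelines in `extract_RAW_log` and `extract_SUM_log` can be approximated in plain Python. This is a rough sketch, not the DAG's actual implementation: the sample lines are made up (the real web.log format is not shown here), and sorting uses plain string order, which may differ from `sort -n` for numeric values.

```python
from collections import Counter


def extract_items(lines):
    """Approximate: grep 'item=' | cut -d'=' -f 2 | cut -d',' -f 1"""
    items = []
    for line in lines:
        line = line.rstrip("\n")
        if "item=" not in line:
            continue  # grep drops lines without 'item='
        second_field = line.split("=")[1]          # cut -d'=' -f 2
        items.append(second_field.split(",")[0])   # cut -d',' -f 1
    return items


def summarize(items):
    """Approximate: sort | uniq -c, returned as (count, item) pairs."""
    counts = Counter(items)
    return [(counts[item], item) for item in sorted(counts)]


if __name__ == "__main__":
    # Hypothetical sample lines, only for illustration.
    sample_log = [
        "item=apple,qty=1",
        "status=ok",
        "item=banana,qty=2",
        "item=apple,qty=5",
    ]
    raw = extract_items(sample_log)
    print(raw)
    print(summarize(raw))
```

Note that, like the shell version, this takes the second `=`-separated field, so it only yields the item value when `item=` is the first key on the line.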
