2023.05.26 (Fri) Study notes

#Airflow #Standalone #backfill

1. Installing Airflow standalone

- Install

export AIRFLOW_HOME=~/airflow
AIRFLOW_VERSION=2.6.1
# Extract the version of Python you have installed. Airflow 2.6.1 does not yet support Python 3.11, so if that is your current version, set PYTHON_VERSION manually to a supported one.
PYTHON_VERSION="$(python --version | cut -d " " -f 2 | cut -d "." -f 1-2)"
CONSTRAINT_URL="https://raw.githubusercontent.com/apache/airflow/constraints-${AIRFLOW_VERSION}/constraints-${PYTHON_VERSION}.txt"
# For example this would install 2.6.1 with python 3.7: https://raw.githubusercontent.com/apache/airflow/constraints-2.6.1/constraints-3.7.txt
pip install "apache-airflow==${AIRFLOW_VERSION}" --constraint "${CONSTRAINT_URL}"

- Run

export AIRFLOW_HOME=~/airflow #실행 시 마다 반복

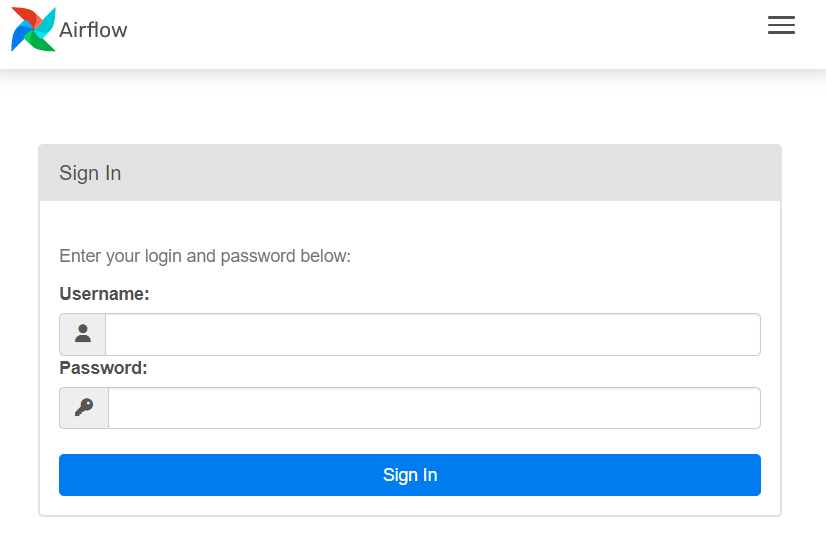

airflow standalone- Airflow UI 접속 - localhost:8080

After connecting, find the password in the terminal output of airflow standalone and log in.

standalone | Airflow is ready
standalone | Login with username: admin password: ************
standalone | Airflow Standalone is for development purposes only. Do not use this in production!

Alternatively, check the password in the standalone_admin_password.txt file.
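The password file can also be read from a script instead of an editor. The sketch below is a minimal illustration, assuming the file sits directly under AIRFLOW_HOME and contains nothing but the password; `read_standalone_password` is a hypothetical helper name, not part of Airflow.

```python
import os
from pathlib import Path


def read_standalone_password(airflow_home=None):
    """Return the admin password that `airflow standalone` saved to disk."""
    # Fall back to $AIRFLOW_HOME, then to ~/airflow (the value exported earlier).
    home = Path(airflow_home or os.environ.get("AIRFLOW_HOME", "~/airflow")).expanduser()
    password_file = home / "standalone_admin_password.txt"
    # Assumption: the file holds only the password, so strip the trailing newline.
    return password_file.read_text(encoding="utf-8").strip()


# Usage (requires that `airflow standalone` has run at least once):
# print(read_standalone_password())
```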

cd ~/airflow
vi standalone_admin_password.txt

- Environment settings

cd ~/airflow
vi airflow.cfg
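As an alternative to editing the file by hand, Airflow also reads options from environment variables named AIRFLOW__{SECTION}__{KEY}. The sketch below builds those names for the two options this post changes, dags_folder and load_examples, both of which belong to the [core] section. `airflow_env_name` is a hypothetical helper, and the values simply mirror the ones used in this post.

```python
import os


def airflow_env_name(section, key):
    """Map an airflow.cfg option to its AIRFLOW__{SECTION}__{KEY} variable name."""
    return f"AIRFLOW__{section.upper()}__{key.upper()}"


# Both options edited in this post live in the [core] section.
overrides = {
    airflow_env_name("core", "dags_folder"): "/home/sub/dags",
    airflow_env_name("core", "load_examples"): "False",
}

# Set them for this process; child processes started from here inherit them.
os.environ.update(overrides)
print(overrides)
```

Environment variables take precedence over airflow.cfg, so this is convenient for temporary overrides without touching the file.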

# airflow.cfg

# Change the DAGs path
# The folder where your airflow pipelines live, most likely a
# subfolder in a code repository. This path must be absolute.
dags_folder = /home/sub/dags

# Disable the example DAGs so that only your own DAGs are shown
# Whether to load the DAG examples that ship with Airflow. It's good to
# get started, but you probably want to set this to ``False`` in a production
# environment
load_examples = False

2. Airflow Test

2-1. Running a single task instance

Test each task on its own by running an actual instance of it.
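To make the placeholders concrete, here is a small sketch that assembles the command line for one task. The DAG id and task id are taken from the example DAG later in this post; `tasks_test_command` is a hypothetical helper that only builds the string and does not run Airflow.

```python
import shlex


def tasks_test_command(dag_id, task_id, logical_date):
    """Build the `airflow tasks test` command line for one task instance."""
    return shlex.join(["airflow", "tasks", "test", dag_id, task_id, logical_date])


print(tasks_test_command("mario-sub-partitioning_ver2", "load_web_log", "2023-05-26"))
```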

airflow dags list  # list the DAGs

# run your first task instance
airflow tasks test [dag id] [task id] [execution date]

2-2. backfill

Use backfill when you want to process data starting from a point earlier than when the DAG begins running.

- Used to fill in missing data for past dates
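As a rough picture of what "filling in past dates" means for a once-a-day DAG, the sketch below lists every calendar day in an inclusive range. This is a simplification for illustration only: Airflow derives the actual runs from the DAG's schedule, and `daily_dates` is a hypothetical helper.

```python
from datetime import date, timedelta


def daily_dates(start, end):
    """List every calendar day from start to end, both inclusive."""
    days = (end - start).days
    return [start + timedelta(days=offset) for offset in range(days + 1)]


for day in daily_dates(date(2023, 5, 26), date(2023, 5, 29)):
    print(day.isoformat())
```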

# run a backfill over a date range
airflow dags backfill [dag id] --start-date [start date] --end-date [end date]

2-3. backfill example

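- DAG description

1. Data collection interval: every day at 5 a.m. (KST)

2. Write code that partitions the data and sends it to the paths below

- s3://pd24/<your English name>/yyyyMMdd/SUM.log

- s3://pd24/<your English name>/dd/MM/yyyy/RAW.log

- s3://pd24/<your English name>/DONE/yyyyMMdd/_DONE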
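The three target paths can be sketched in plain Python, assuming the run's date arrives in UTC and the partitions should use the KST date. `partition_keys` and the user name "sub" are placeholders for illustration; they are not part of Airflow or of the assignment.

```python
from datetime import datetime, timedelta, timezone

KST = timezone(timedelta(hours=9))  # Korea Standard Time, no daylight saving


def partition_keys(logical_date_utc, user):
    """Return the three S3 keys for one run, partitioned by the KST date."""
    kst = logical_date_utc.astimezone(KST)
    base = f"s3://pd24/{user}"
    return {
        "SUM": f"{base}/{kst:%Y%m%d}/SUM.log",
        "RAW": f"{base}/{kst:%d/%m/%Y}/RAW.log",
        "DONE": f"{base}/DONE/{kst:%Y%m%d}/_DONE",
    }


# 20:00 UTC on May 25 is 05:00 KST on May 26.
run = datetime(2023, 5, 25, 20, 0, tzinfo=timezone.utc)
for name, key in partition_keys(run, "sub").items():
    print(name, key)
```

Converting to KST before formatting matters because a 5 a.m. KST run falls on the previous day in UTC.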

- Code example

from datetime import datetime, timedelta

import pendulum
from airflow import DAG
from airflow.models.variable import Variable
from airflow.operators.bash import BashOperator
from airflow.operators.empty import EmptyOperator

MY_PATH = '/home/sub/airflow/data'
AWS_PATH = 's3://mypath'

local_tz = pendulum.timezone("Asia/Seoul")

# Jinja templates rendered per run. execution_date is in UTC, so add 9 hours to get the KST date.
exe_kr_nodash = '{{ execution_date.add(hours=9).strftime("%Y%m%d") }}'
year = '{{ execution_date.add(hours=9).strftime("%Y") }}'
month = '{{ execution_date.add(hours=9).strftime("%m") }}'
day = '{{ execution_date.add(hours=9).strftime("%d") }}'

default_args = {
    'owner': 'airflow',
    'depends_on_past': False,
    'start_date': datetime(2023, 5, 30, tzinfo=local_tz),
    'retries': 0,
}

test_dag = DAG(
    'mario-sub-partitioning_ver2',
    default_args=default_args,
    schedule_interval="0 5 * * *",  # every day at 05:00 in the DAG's timezone (KST)
)

# Download web.log from S3 and echo the rendered date values for debugging
load_web_log = BashOperator(
    task_id='load_web_log',
    bash_command=f"""aws s3 cp {AWS_PATH}/web.log {MY_PATH}/web.log
        echo "{exe_kr_nodash} {year} {month} {day}"
    """,
    dag=test_dag,
)

# RAW.log: the value after 'item=' (up to the first comma) from each matching line
extract_raw = BashOperator(
    task_id='extract_RAW_log',
    bash_command=f"cat {MY_PATH}/web.log | grep 'item=' | cut -d'=' -f 2 | cut -d',' -f 1 > {MY_PATH}/RAW.log",
    dag=test_dag,
)

# SUM.log: the same values, sorted and counted per distinct value
extract_sum = BashOperator(
    task_id='extract_SUM_log',
    bash_command=f"cat {MY_PATH}/web.log | grep 'item=' | cut -d'=' -f 2 | cut -d',' -f 1 | sort -n | uniq -c > {MY_PATH}/SUM.log",
    dag=test_dag,
)

touch_done = BashOperator(
    task_id='DONE',
    bash_command=f"touch {MY_PATH}/DONE",
    dag=test_dag,
)

# RAW.log goes under dd/MM/yyyy and SUM.log under yyyyMMdd, matching the path layout in the DAG description
transfer_raw = BashOperator(
    task_id='transfer_RAW_log',
    bash_command=f"""
        aws s3 cp {MY_PATH}/RAW.log {AWS_PATH}/sub/{day}/{month}/{year}/RAW.log
        sleep 1
    """,
    dag=test_dag,
)

transfer_sum = BashOperator(
    task_id='transfer_SUM_log',
    bash_command=f"""
        aws s3 cp {MY_PATH}/SUM.log {AWS_PATH}/sub/{exe_kr_nodash}/SUM.log
        sleep 1
    """,
    dag=test_dag,
)

transfer_done = BashOperator(
    task_id='transfer_DONE',
    bash_command=f"""
        aws s3 cp {MY_PATH}/DONE {AWS_PATH}/sub/DONE/{exe_kr_nodash}/_DONE
        sleep 1
    """,
    dag=test_dag,
)

start_task = EmptyOperator(task_id='start', dag=test_dag)
end_task = EmptyOperator(task_id='end', dag=test_dag)

start_task >> load_web_log >> [extract_raw, extract_sum] >> touch_done >> [transfer_raw, transfer_sum, transfer_done] >> end_task

- Running the backfill and results

airflow dags backfill mario-sub-partitioning_ver2 --start-date 2023-05-26 --end-date 2023-05-29

$ aws s3 ls mypath/sub/

# Data for 2023-05-26 through 2023-05-29
PRE 20230526/
PRE 20230527/
PRE 20230528/
PRE 20230529/
PRE 26/
PRE 27/
PRE 28/
PRE 29/
PRE DONE/
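A listing like this can be checked mechanically. The sketch below parses the PRE lines and reports any expected prefix that is missing; `listed_prefixes` and `missing_prefixes` are hypothetical helpers, and the listing text is copied from the output above.

```python
def listed_prefixes(ls_output):
    """Extract prefix names from `aws s3 ls` lines of the form 'PRE name/'."""
    prefixes = set()
    for line in ls_output.splitlines():
        parts = line.split()
        if len(parts) == 2 and parts[0] == "PRE":
            prefixes.add(parts[1].rstrip("/"))
    return prefixes


def missing_prefixes(ls_output, expected):
    """Return the expected prefixes that do not appear in the listing, sorted."""
    return sorted(set(expected) - listed_prefixes(ls_output))


listing = """
PRE 20230526/
PRE 20230527/
PRE 20230528/
PRE 20230529/
PRE 26/
PRE 27/
PRE 28/
PRE 29/
PRE DONE/
"""
expected = ["20230526", "20230527", "20230528", "20230529", "DONE"]
print(missing_prefixes(listing, expected))  # prints []
```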
